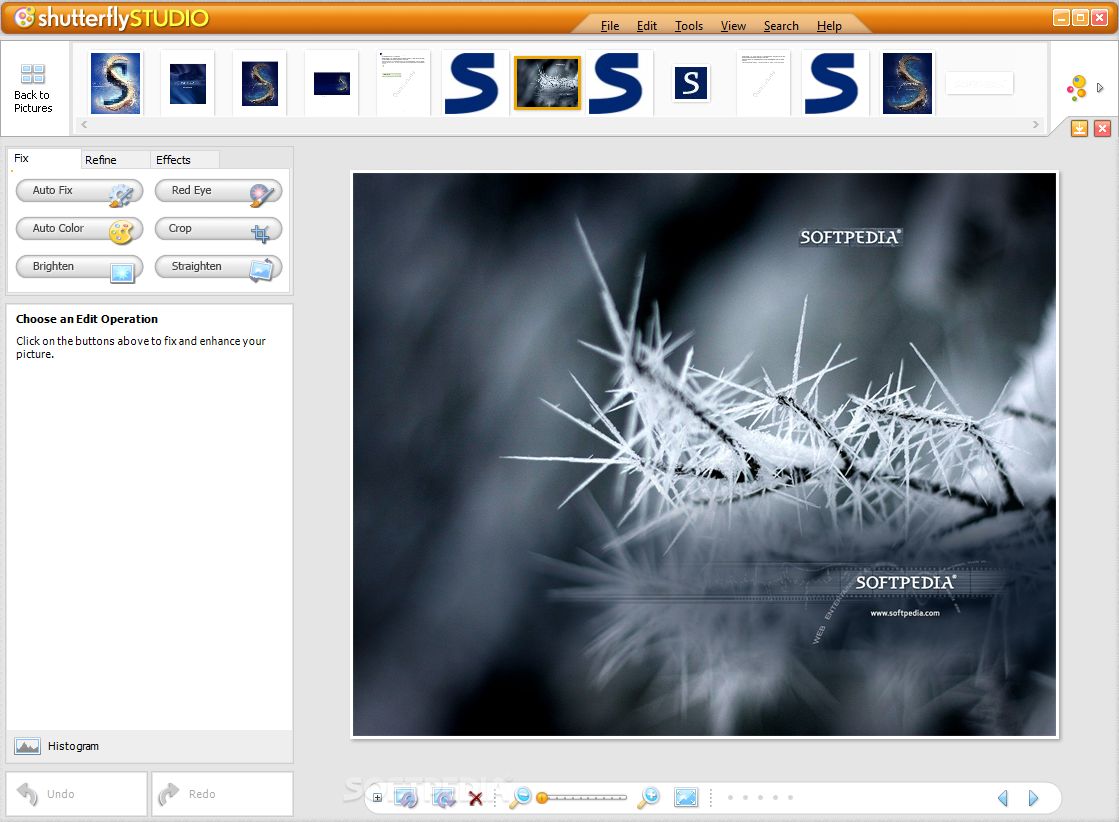

Photos’ Memories look like human memories

Introduced last year, Photos’ Memories feature collects photos around a location, a time or holiday, or a theme determined in part by machine learning, which then also highlights the photos in that assembled set that it thinks are most representative. Let’s just say it could be fairly haphazard in the previous version, producing some howlers in terms of odd photos chosen (sometimes blurry or nearly empty) and not quite getting what might have made that period significant.

The improved Memories feature shows what seems to be a better selection of photos, probably by working harder to find common sets of faces and performing sentiment analysis to find smiles and people looking at the camera. On average, they seem less bizarre, and less like a rogue robot assembled them.

Photos 3 shows every previous import operation, organized by date.

And Apple has clearly seeded smart ideas into the mix. Fluffy Friends over the Years shows every dog and cat I’ve apparently ever photographed, using the scene analysis algorithms that also let you search by keywords like dog and cat.

Live Photos get a purpose (and editing gets better)

I confess I never much liked Live Photos, because there wasn’t much you could do with them, except play them back with the random before/after videos. (iOS 11 seems to have upped the frame rate on those, making them smoother.) Live Photos seemed like a clever idea in search of a reason. While Apple improved some aspects of Live Photos in iOS 10, such as adding image stabilization, they remained a Harry Potterish gimmick.

Photos 3 finally lets you create new kinds of results, not just in iOS (where the feature is somewhat hidden), but in macOS as well.

Live Photos can be transformed into loops, bounces, and long exposures.

Click Edit with a Live Photo selected, and the Adjust tab of the editing window shows Live Photos-specific tools at the bottom. You can turn the feature off, as before, and mute its audio, but you can also select a new “key photo,” or the image displayed when the photo is at rest. And you can trim out unwanted parts of the clip.

But Apple added transformative features common in Instagram and other photo apps: Loop, which turns a subset of the live portion into a continuous cycle, and Bounce, which plays the sequence forward to the end and then backwards to the beginning. Unfortunately, you can’t select which portion gets these effects; it seems like an algorithm-driven choice. And you can’t (yet) convert your regular videos in full or as clips to apply these features to.

A final option, Long Exposure, has a lot of promise. With a still shot that has movement within the frame, you can get a lovely artistic image that captures the feel of movement. Looking through all my Live Photos, I didn’t find many that worked, but I will likely now enable Live Photos for specific shots that I want to extract as long exposures.

The whole editing interface offers significant UI improvements, too, grouping tasks into tabs at the top (Adjust, Filters, and Crop) and letting you turn on and off the depth effect in two-camera photos. A couple of more advanced features appear in the Adjust menu, too: curves, for a different and richer way to re-map the appearance of ranges of color in an image, and selective color, which you can use to swap out a specific color range in an image with an entirely different hue, saturation, and luminance.

A toolbar now appears in all Photos views, not just while editing, that includes a rotation button (hold down Option to toggle it from counterclockwise to clockwise) and Auto Enhance. Photos also now supports editing images in external photo-editing apps by selecting from an Edit With menu. When saved and closed from that external app, those edits are stored as a non-destructive layer in Photos, so you have the original and the revised version.

Selective color, now built into Photos, lets you replace color families within a photo without making other changes (left, original; right, modified to accentuate changes).

People finally syncs, and it’s better, too

Apple promised a year ago, before the release of its revamped facial-identification feature, that “People are synced among devices where you’re signed in with the same Apple ID.” That line appeared in the iOS 10 manual, and the company had made other assurances. It never happened, and Apple never answered questions about it or explained itself. My assumption was that before release, Apple found a flaw either in the way it merged dissimilar sets of people on different devices or in the privacy approach it took.

You’ll note that Apple didn’t and still doesn’t sync this new algorithm-based People album to. That would put that kind of information in a place where it was at greater risk of being extracted or even subpoenaed.

This time around, Apple got right whatever it needed to. People’s overall design has a crisper, bolder look, so that names are more easily readable.
